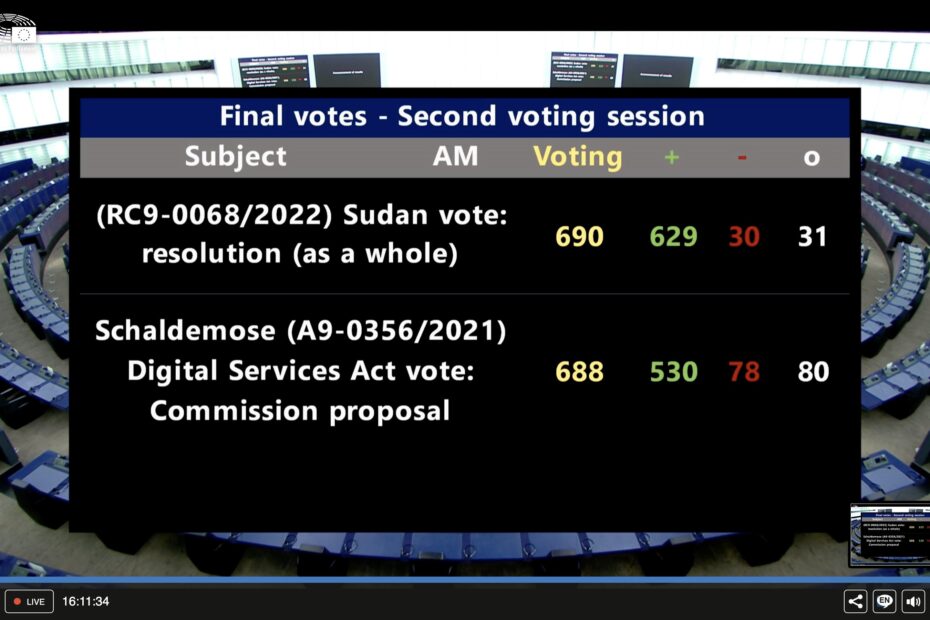

Yesterday the European Parliament adopted its negotiating position on the EU’s new content moderation rules, the so-called Digital Services Act. The text prepared by the Committee on Internal Market and Consumer Protection (IMCO) was largely adopted, with a few amendments added in plenary.

Since we already compared the committee’s version with the European Commission’s proposal and the Council’s negotiating mandate in December, this post only highlights the amendments added during the plenary vote.

1. No outright ban on targeted advertising, but many restrictions

An outright ban on targeted advertising, which had been discussed in the committees, was not put to a vote. However, a majority of MEPs supported three amendments on the topic (AMs 498, 499, 500). The tabled text already prohibited using minors’ personal data for targeting, as well as “dark patterns”, but the plenary vote added two new aspects:

- No consent walls: If users refuse consent to cookies or tracking, they must still be able to access the service (AM 498 and AM 499).

- Prohibition of targeting using sensitive data: Under AM 500, targeted advertising that uses sensitive personal data, such as racial or ethnic origin, political opinions, religious or philosophical beliefs, or trade union membership, will not be allowed.

| AM 498 | AM 499 | AM 500 |
|---|---|---|
| (52 a) Refusing consent in processing personal data for the purposes of advertising should not result in access to the functionalities of the platform being disabled. Alternative access options should be fair and reasonable both for regular and for one-time users, such as options based on tracking-free advertising. Targeting individuals on the basis of special categories of data which allow for targeting vulnerable groups should not be permitted. | 24. (new) Online platforms shall ensure that recipients of services can easily make an informed choice on whether to consent, as defined in Article 4 (11) and Article 7 of Regulation (EU) 2016/679, in processing their personal data for the purposes of advertising by providing them with meaningful information, including information about how their data will be monetised. Online platforms shall ensure that refusing consent shall be no more difficult or time-consuming to the recipient than giving consent. In the event that recipients refuse to consent, or have withdrawn consent, recipients shall be given other fair and reasonable options to access the online platform. | 24. (new) Targeting or amplification techniques that process, reveal or infer personal data of minors or personal data referred to in Article 9(1) of Regulation (EU) 2016/679 for the purpose of displaying advertisement are prohibited. |

2. No “Media Exemption”

This was perhaps the most discussed issue ahead of the plenary vote. Should registered media outlets be exempt from platform moderation rules or placed under a special regime? In the end, these proposals did not make it. Instead, a softer requirement was added: platforms must respect media freedom and pluralism in their terms and conditions (AM 513).

| AM 513 |
|---|
| Providers of intermediary services shall use fair, non-discriminatory and transparent terms and conditions. Providers of intermediary services shall draft those terms and conditions in clear, plain user friendly, and unambiguous language and shall make them publicly available in an easily accessible and machine-readable format in the languages of the Member State towards which the service is directed. In their terms and conditions, providers of intermediary services shall respect the freedom of expression, freedom and pluralism of the media, and other fundamental rights and freedoms, as enshrined in the Charter as well as the rules applicable to the media in the Union. |

3. Anonymous Use of Digital Services

With 393 votes in favour and 284 against, a majority of MEPs supported the right to use online services anonymously. In practice, this would mean that it should be possible to use and pay for a service without the provider collecting personal data. Even if this amendment does not survive the trilogue negotiations, it is an important sign that a majority of the House opposes a “real name obligation” online, which is regularly proposed by interior ministers of various Member States.

| AM 520 |
|---|
| Art. 7(new) 1c. Without prejudice to Regulation (EU) 2016/679 and Directive 2002/58/EC, providers shall make reasonable efforts to enable the use of and payment for that service without collecting personal data of the recipient. |

4. Noteworthy Rejections

The House rejected a proposal (AM 493) for a “must carry” obligation covering content posted by politicians (addressing questions such as: can Twitter delete a politician’s account?).

It also rejected a proposed “immediate liability” provision (AM 207), meaning that platforms will be able to leave content up while they assess its legality.

A provision obliging platforms to offer at least one recommender system that is easy to select and is not based on profiling made it into the final negotiating mandate, despite being challenged.

Trilogues Begin

The first negotiations between the Council and the Parliament are expected to take place before the end of this month. Since the two versions have a lot in common, a quick adoption is not out of the question. However, the two positions diverge considerably on two points:

- Oversight: which authority will supervise which platforms. The EP’s text leaves this to national regulators, while the Council wants the Commission to be responsible at least for very large platforms.

- Tracking-free advertising and services: The Parliament added a number of restrictions, while the Council has largely left this topic untouched. It will be interesting to see which provisions make it into the final text.