Wikipedia will be harmed by France’s proposed SREN bill: Legislators should avoid unintended consequences

Written by Jan Gerlach, Director of Public Policy at the Wikimedia Foundation; Phil Bradley-Schmieg, Lead Counsel at the Wikimedia Foundation; and Michele Failla, Senior EU Policy Specialist at Wikimedia Europe

(Wikimédia France, the French national Wikimedia chapter, has also published a blog post on the SREN bill)

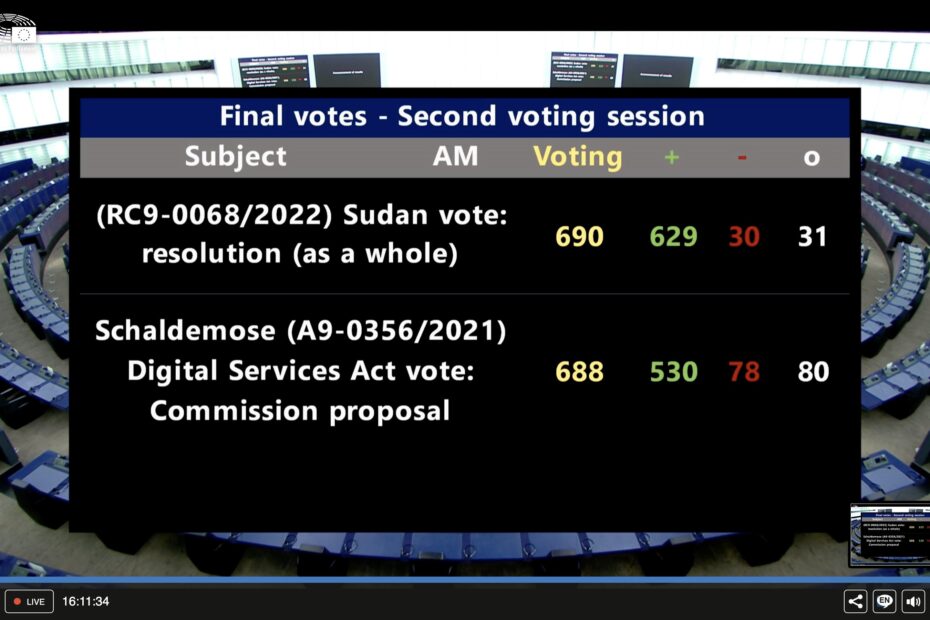

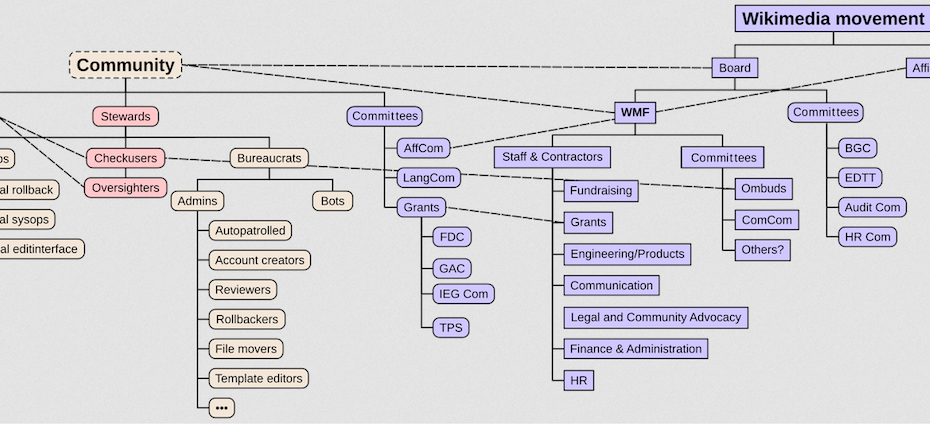

The French legislature is currently working on a bill that aims to secure and regulate the digital space (widely known by its acronym, SREN). As currently drafted, the bill not only threatens Wikipedia’s community-led model of decentralized collaboration and decision-making but also contradicts the EU’s data protection rules and its new content moderation law, the Digital Services Act (DSA). For these reasons, the Wikimedia Foundation and Wikimedia Europe call on French lawmakers to amend the SREN bill so that public interest projects like Wikipedia are protected and can continue to flourish.
