Analysis

In this installment of our series of longer features, we analyse the scope of the AI Act as proposed by the European Commission and assess its adequacy in the context of the impact of AI in practice.

AI is going to shape the Internet more and more and, through it, access to information and the production of knowledge. Wikipedia, Wikimedia Commons and Wikidata are supported by machine learning tools, and the role of these tools will grow in the coming years. We are following the proposal for the Artificial Intelligence Act, which, as the first global attempt to legally regulate AI, will have consequences for our projects, our communities and users around the world. What are we really talking about when we speak of AI? And how much of it do we need to regulate?

The devil is in the definition

It is indispensable to define the scope of any matter to be regulated, and in the case of AI that task is no less difficult than it is for "terrorist content", for example. Different debates, from scientific ones to popular public perceptions, take different approaches to what AI is. When hearing "AI", some people think of sophisticated algorithms, sometimes inside an android, undertaking complex, conceptual and abstract tasks or even featuring a form of self-consciousness. Others include in the definition algorithms that, for example, modify their operations based on comparisons between and against large amounts of data, without any abstract extrapolation.

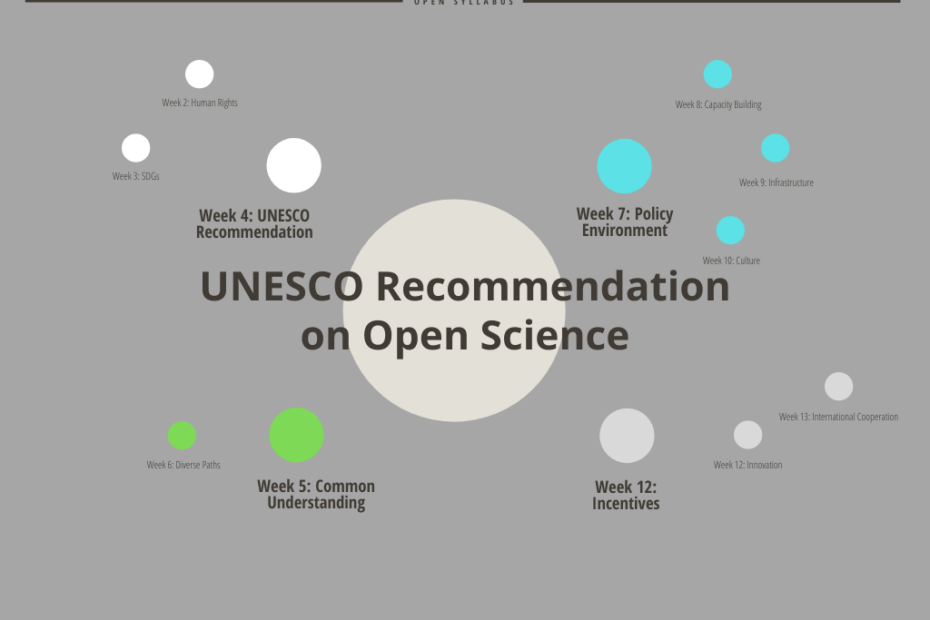

The definition proposed by the European Commission in the AI Act lists software developed with specifically named techniques, among them machine learning approaches including deep learning, logic- and knowledge-based approaches, as well as statistical approaches including Bayesian estimation, search and optimization methods. The list is quite broad, and it clearly encompasses a range of technologies used today by companies, internet platforms and public institutions alike.
